What are Common Natural Language Processing (NLP) Tools?

Natural Language Processing (NLP) tools are software components, libraries, or frameworks designed to facilitate the interaction between computers and human language. These tools draw on computational linguistics and machine learning to analyze, interpret, and generate human-like text, enabling applications across many domains.

Here are some classic NLP tools:

- Tokenization Tools: Tokenization tools break a text into smaller units called tokens, such as words, phrases, or sentences.

- Examples: NLTK (Natural Language Toolkit), SpaCy, and the Stanford NLP Tokenizer.

- Part-of-Speech Tagging Tools: These tools assign parts of speech (e.g., nouns, verbs, adjectives) to each word in a sentence.

- NLTK, SpaCy, and Stanford NLP provide robust part-of-speech tagging capabilities.

- Named Entity Recognition (NER) Tools: NER tools identify and categorize entities (e.g., persons, organizations, locations) within a text.

- SpaCy, NLTK, and Stanford NLP include NER components.

- Text Classification Tools: Text classification tools categorize or label text into predefined classes or categories.

- Scikit-learn, TensorFlow, and PyTorch are widely used for building text classification models.

- Sentiment Analysis Tools: Sentiment analysis tools determine the sentiment expressed in a text, such as positive, negative, or neutral.

- Examples: VADER (Valence Aware Dictionary and sEntiment Reasoner), TextBlob, and machine learning-based models.

- Machine Translation Tools: These tools automatically translate text from one language to another.

- Examples: Google Translate, OpenNMT, and Marian NMT.

- Text Summarization Tools: Text summarization tools generate concise summaries of longer pieces of text.

- Examples: Gensim, Sumy, and BART (Bidirectional and Auto-Regressive Transformers).

- Speech Recognition Tools: Speech recognition tools convert spoken language into written text.

- Examples: Google Speech-to-Text, Sphinx, and DeepSpeech.

- Chatbot Development Tools: These tools aid in creating and deploying chatbots for conversational interfaces.

- Examples: Rasa, Microsoft Bot Framework, and Dialogflow.

- Dependency Parsing Tools: Dependency parsing tools analyze the grammatical structure of sentences to identify relationships between words.

- SpaCy, Stanford NLP, and NLTK offer dependency parsing capabilities.

- Topic Modeling Tools: Topic modeling tools identify topics within a collection of text documents.

- Examples: Latent Dirichlet Allocation (LDA) implementations such as those in Gensim and Mallet.

These NLP tools are crucial in advancing language understanding, enabling applications ranging from search engines and virtual assistants to sentiment analysis and language translation. Researchers and developers leverage these tools to build sophisticated language models and applications across diverse industries.
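To make two of the tasks above concrete, here is a minimal sketch of tokenization and lexicon-based sentiment scoring using only the Python standard library. It is not how NLTK, SpaCy, or VADER work internally: the `LEXICON` dictionary and the `tokenize` and `sentiment` functions are hypothetical names invented for this illustration, and real tools use far larger lexicons and many more rules.

```python
import re

# Hypothetical toy lexicon for illustration only. Real tools such as VADER
# ship much larger, carefully weighted lexicons plus extra heuristics.
LEXICON = {
    "good": 1.0, "great": 2.0, "love": 2.0,
    "bad": -1.0, "terrible": -2.0, "hate": -2.0,
}

def tokenize(text):
    """Split text into lowercase word tokens and standalone punctuation marks."""
    return re.findall(r"[a-z0-9']+|[^\w\s]", text.lower())

def sentiment(text):
    """Sum lexicon scores over the tokens and map the total to a coarse label."""
    score = sum(LEXICON.get(token, 0.0) for token in tokenize(text))
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(tokenize("I love this library, it's great!"))
print(sentiment("I love this library, it's great!"))
print(sentiment("The docs are terrible."))
```

A regular expression is enough for a sketch, but library tokenizers also handle abbreviations, URLs, and language-specific rules that this pattern ignores.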

Top 6 NLP Tools: Libraries & Frameworks

1. NLTK (Natural Language Toolkit) [Python Based NLP Tools]

- One of the oldest and most widely used NLP libraries.

- A comprehensive toolkit for text processing tasks, including tokenization, stemming, and part-of-speech tagging.

- Extensive collection of corpora, linguistic resources, and pre-trained models for various NLP applications.

- It is widely employed in academia and industry for research and development.

2. SpaCy [Python Based NLP Tools]

- Known for its efficiency and speed in NLP tasks.

- Emphasizes production-level capabilities, making it suitable for real-world applications.

- Provides tokenization, named entity recognition (NER), and syntactic parsing with pre-trained models.

- Famous for its ease of use and integration with deep learning frameworks.

Syntax analysis in spaCy

3. Stanford NLP

- Offers a suite of NLP tools and models for various linguistic analysis tasks.

- Robust solutions for part-of-speech tagging, named entity recognition, and sentiment analysis.

- It is well-suited for research and development due to its high-quality linguistic resources.

- It is widely used in both academic and industrial NLP projects.

4. Scikit-learn [Python Based NLP Tools]

- Although a general-purpose machine learning library, Scikit-learn offers robust tools for text analysis and feature extraction.

- It is widely used for tasks like text classification, clustering, and dimensionality reduction.

- Provides a simple and consistent interface for working with text data.

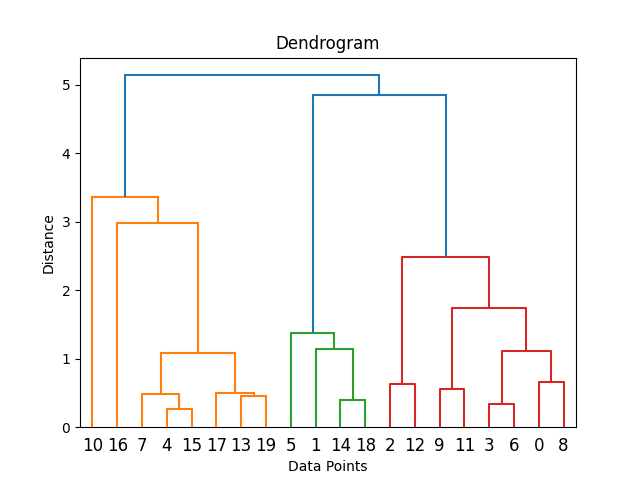

A dendrogram showing the results of a hierarchical clustering example in Python using scikit-learn and matplotlib

5. TensorFlow and PyTorch

- General-purpose deep learning libraries that are extensively used in NLP.

- TensorFlow’s high-level API (tf.keras) and PyTorch’s dynamic computation graph make them popular choices for building NLP models.

- Support for implementing complex neural network architectures used in natural language processing.

6. Gensim [Python Based NLP Tools]

- Specialized library for topic modelling and document similarity analysis.

- Implements algorithms like Word2Vec for word embeddings and doc2vec for document embeddings.

- It is widely used in applications requiring semantic analysis and document clustering.

Cosine similarity is often used with word embeddings for document retrieval.
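As a rough illustration of similarity-based retrieval, the sketch below ranks documents by cosine similarity to a query. To stay self-contained it uses simple word-count vectors as a stand-in for learned embeddings, so it is not Gensim's API; `bag_of_words`, `cosine_similarity`, and `rank` are hypothetical helper names.

```python
import math
from collections import Counter

def bag_of_words(text):
    """Represent a text as a sparse vector of word counts."""
    return Counter(text.lower().split())

def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty text is treated as similar to nothing
    return dot / (norm_a * norm_b)

def rank(query, documents):
    """Return the documents sorted from most to least similar to the query."""
    query_vec = bag_of_words(query)
    return sorted(
        documents,
        key=lambda doc: cosine_similarity(query_vec, bag_of_words(doc)),
        reverse=True,
    )

docs = [
    "the cat sat on the mat",
    "dogs chase cats in the park",
    "stock markets fell sharply today",
]
print(rank("cat on a mat", docs)[0])
```

With embeddings in place of counts, the same cosine formula can match texts that share meaning but not exact words, which a count-based vector cannot do.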

Top 4 NLP Tools [Libraries & Frameworks] For Large Language Models (LLMs)

1. Hugging Face Transformers

- A leading platform for sharing, discovering, and training transformer-based models.

- Houses a vast collection of pre-trained models, including BERT, GPT, and more.

- Allows seamless integration of state-of-the-art models into various NLP applications.

- Known for its user-friendly API and community-driven model development.

2. LangChain

- LangChain provides a clean interface for integrating LLMs into applications using Python.

- Simple APIs for text generation, classification, QA, search, and translation.

- Chaining prompts for complex workflows.

- Tools to load data and fine-tune models.

- Extraction of model embeddings.

- Explainability of model behaviour.

- Modular backend connectivity to LLMs.

3. LlamaIndex

- LlamaIndex creates vector indexes of text for ultra-fast semantic search using LLM embeddings.

- Indexing millions of text passages as vectors.

- Semantic text search via vector similarity.

- Support for 8+ open-source embedding models.

- Cloud-native deployment on Kubernetes.

- Python and REST APIs for integration.

4. Haystack

- Haystack provides an end-to-end pipeline for building document search interfaces using LLMs.

- Multi-language document store integrations.

- Text vectorization using models like sentence-transformers.

- Scalable indexes for vector similarity search.

- REST APIs and UI components for search interfaces.

- Extractive QA to find answer passages.

Deep Dive into More Complex Natural Language Processing (NLP) Models Used By The Top Tools

Word Embeddings (Word2Vec, GloVe)

Word embeddings have revolutionized natural language processing (NLP) by representing words as dense vectors in a continuous vector space. These embeddings capture semantic relationships between words, enabling machines to process language with more nuance.

1. Word2Vec: Word2Vec is a popular word embedding technique developed by Google. It employs neural networks to learn distributed representations of words based on their context in a given corpus. Two primary models within Word2Vec are Skip-gram and Continuous Bag of Words (CBOW).

- Skip-gram: In the Skip-gram model, the algorithm predicts the context words given a target word. This helps capture a word’s semantic meaning by considering its surrounding context.

- Continuous Bag of Words (CBOW): In contrast, CBOW predicts the target word from its surrounding context words. It is well suited to capturing syntactic regularities between words.
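The difference between the two models is easiest to see in the training examples each one builds from a sentence. The sketch below only generates those examples and does not train a network; the function names and the `window` parameter are illustrative choices, not part of any particular Word2Vec implementation.

```python
def skipgram_pairs(tokens, window=2):
    """Skip-gram: one (target, context_word) pair per neighbor in the window."""
    pairs = []
    for i, target in enumerate(tokens):
        start = max(0, i - window)
        end = min(len(tokens), i + window + 1)
        for j in range(start, end):
            if j != i:
                pairs.append((target, tokens[j]))
    return pairs

def cbow_examples(tokens, window=2):
    """CBOW: one (context_words, target) example per position."""
    examples = []
    for i, target in enumerate(tokens):
        start = max(0, i - window)
        end = min(len(tokens), i + window + 1)
        context = [tokens[j] for j in range(start, end) if j != i]
        examples.append((context, target))
    return examples

sentence = ["the", "quick", "brown", "fox"]
print(skipgram_pairs(sentence, window=1))
print(cbow_examples(sentence, window=1))
```

Skip-gram produces many small examples per word, while CBOW produces one example per position whose input is the whole context.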

Word2Vec embeddings find applications in various NLP tasks:

- Semantic Similarity: Capturing relationships between words and determining similarity.

- Analogies: Solving analogies like “king – man + woman = queen.”

- Named Entity Recognition (NER): Enhancing context awareness in NER systems.

2. GloVe (Global Vectors for Word Representation): GloVe is another influential word embedding technique focusing on global word co-occurrence statistics. It constructs an embedding matrix by analyzing the frequency of word co-occurrence in a large corpus.

GloVe vector example: “king” is to “queen” as “man” is to “woman.”
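The global co-occurrence statistics that GloVe starts from can be sketched as a simple counting pass over a corpus. This is only the counting step under simplifying assumptions (whitespace tokenization, a symmetric window, unweighted counts); the actual GloVe training objective that turns these counts into vectors is not shown, and `cooccurrence_counts` is a hypothetical helper name.

```python
from collections import Counter

def cooccurrence_counts(sentences, window=2):
    """Count how often each ordered word pair appears within `window` tokens."""
    counts = Counter()
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            # Look back at the previous `window` tokens and count both directions.
            for j in range(max(0, i - window), i):
                counts[(word, tokens[j])] += 1
                counts[(tokens[j], word)] += 1
    return counts

corpus = ["ice is cold", "steam is hot", "ice is solid"]
counts = cooccurrence_counts(corpus)
print(counts[("ice", "is")], counts[("ice", "cold")], counts[("steam", "cold")])
```

Words that appear in similar contexts end up with similar rows in this table, which is the signal the embedding step then compresses into dense vectors.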

Advantages:

- Syntactic and Semantic Information: GloVe embeddings inherently capture syntactic and semantic relationships.

- Linear Relationships: Vector operations like addition and subtraction can represent relationships between words.
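The linear-relationship property can be illustrated with hand-made vectors. The three-dimensional `VECTORS` below are hypothetical values chosen so the arithmetic works out; they are not real GloVe or Word2Vec embeddings, which typically have tens to hundreds of dimensions and only approximately satisfy such analogies.

```python
import math

# Hypothetical toy vectors: (royalty, masculine, feminine). Not real embeddings.
VECTORS = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.8, 0.1],
    "woman": [0.1, 0.1, 0.8],
    "apple": [0.0, 0.1, 0.1],
}

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def analogy(a, b, c, vectors):
    """Answer 'a is to b as c is to ?' with the word nearest to b - a + c."""
    target = [vb - va + vc for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    # The three input words are excluded from the candidates, as is customary.
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vectors[w], target))

print(analogy("man", "king", "woman", VECTORS))  # prints: queen
```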

Practical Applications:

- Document Analysis: Enhancing document similarity and clustering based on word relationships.

- Sentiment Analysis: Improving sentiment analysis models by leveraging semantic embeddings.

- Language Translation: Assisting in the development of more context-aware translation systems.

Challenges and Considerations:

- Contextual Limitations: Both Word2Vec and GloVe may struggle with polysemy (multiple meanings of a word) and capturing context nuances.

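- Static Representations: Word2Vec and GloVe embeddings are fixed after training. Each word has a single vector regardless of the sentence it appears in, so the representation does not adapt to context or to changes in meaning over time.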

Word embeddings, particularly Word2Vec and GloVe, have significantly advanced the field of NLP. Their ability to represent semantic relationships in a continuous vector space has made them essential tools in developing more accurate and context-aware language models. As the field progresses, addressing challenges and combining these techniques with newer models like transformers continues to refine language understanding in machines.

Recurrent Neural Networks (RNN)

Recurrent Neural Networks (RNNs) are a class of neural networks designed to process sequential data, making them well-suited for natural language processing (NLP) tasks. Unlike traditional neural networks, RNNs can maintain a hidden state that captures information about previous inputs in the sequence.

1. Architecture and Operation:

- RNN Cell: The basic building block of an RNN is the recurrent cell, which allows information to persist and be passed from one step of the sequence to the next.

- Hidden State: The hidden state in an RNN serves as a memory that retains information about previous inputs. This recurrent nature enables the network to capture dependencies and context within sequential data.
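The recurrence described above can be written out in a few lines. This is a minimal sketch of a vanilla (Elman-style) RNN forward pass using plain Python lists; the weight values are arbitrary numbers picked for the example, and `rnn_step` and `run_rnn` are illustrative names rather than any framework's API.

```python
import math

def rnn_step(x, h_prev, W_xh, W_hh, b_h):
    """One step: h_t = tanh(W_xh @ x_t + W_hh @ h_{t-1} + b_h)."""
    h_new = []
    for i in range(len(b_h)):
        total = b_h[i]
        total += sum(W_xh[i][k] * x[k] for k in range(len(x)))
        total += sum(W_hh[i][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new

def run_rnn(inputs, W_xh, W_hh, b_h):
    """Feed a sequence through the cell, carrying the hidden state forward."""
    h = [0.0] * len(b_h)  # initial hidden state
    states = []
    for x in inputs:
        h = rnn_step(x, h, W_xh, W_hh, b_h)
        states.append(h)
    return states

# Arbitrary example weights: input size 1, hidden size 2.
W_xh = [[0.5], [-0.3]]
W_hh = [[0.1, 0.2], [0.0, 0.4]]
b_h = [0.0, 0.0]

# The second input is 0.0 in both sequences, yet the resulting hidden states
# differ because the state still remembers the first input.
print(run_rnn([[1.0], [0.0]], W_xh, W_hh, b_h)[1])
print(run_rnn([[0.0], [0.0]], W_xh, W_hh, b_h)[1])
```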

2. Challenges with Traditional RNNs:

- Vanishing and Exploding Gradient: One of the significant challenges faced by traditional RNNs is the vanishing and exploding gradient problem. Gradients either become extremely small, causing the model to lose information, or excessively large, leading to unstable training.

- Long-Term Dependency: Traditional RNNs struggle to capture long-term dependencies in sequences due to the diminishing impact of earlier inputs on the hidden state.
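A scalar example makes the gradient problem visible. For the one-unit RNN h_t = tanh(w * h_{t-1} + x_t), the chain rule gives the gradient of the final state with respect to the initial state as a product of per-step factors w * (1 - h_t^2). The sketch below, with a hypothetical helper `gradient_through_time`, multiplies those factors over 20 steps.

```python
import math

def gradient_through_time(w, inputs, h0=0.0):
    """Return d h_T / d h_0 for the scalar RNN h_t = tanh(w * h_{t-1} + x_t)."""
    h = h0
    grad = 1.0
    for x in inputs:
        h = math.tanh(w * h + x)
        grad *= w * (1.0 - h * h)  # chain rule factor d h_t / d h_{t-1}
    return grad

steps = [0.0] * 20  # zero inputs keep h at 0, so each factor is exactly w
print(gradient_through_time(0.5, steps))  # shrinks toward zero (vanishing)
print(gradient_through_time(1.5, steps))  # grows rapidly (exploding)
```

With a recurrent weight below 1 the product collapses toward zero, and with a weight above 1 it blows up, which is why early inputs have little or unstable influence on training in plain RNNs.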

3. LSTM (Long Short-Term Memory) and GRU (Gated Recurrent Unit):

- LSTM is an extension of RNNs designed to overcome the vanishing gradient problem. It introduces a more complex cell structure with gates that control the flow of information, allowing for better retention and utilization of long-term dependencies.

- GRU: Similar to LSTM, Gated Recurrent Units (GRUs) are designed to address the vanishing gradient problem. GRUs have a more straightforward structure than LSTMs, with fewer parameters, making them computationally more efficient.
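To show how gating controls the flow of information, here is a sketch of a single GRU step with scalar input and state. The parameter names in the dictionaries are hypothetical, and the final interpolation uses one common convention (`z` weights the candidate state); some references swap the roles of `z` and `1 - z`.

```python
import math

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def gru_step(x, h_prev, p):
    """One scalar GRU step; p holds the (hypothetical) parameter values."""
    z = sigmoid(p["w_z"] * x + p["u_z"] * h_prev + p["b_z"])  # update gate
    r = sigmoid(p["w_r"] * x + p["u_r"] * h_prev + p["b_r"])  # reset gate
    h_cand = math.tanh(p["w_h"] * x + p["u_h"] * (r * h_prev) + p["b_h"])
    # Interpolate between keeping the old state and writing the candidate.
    return (1.0 - z) * h_prev + z * h_cand

# A strongly negative update-gate bias keeps z near 0, so memory is preserved.
KEEP = dict(w_z=0.0, u_z=0.0, b_z=-20.0, w_r=1.0, u_r=1.0, b_r=0.0,
            w_h=1.0, u_h=1.0, b_h=0.0)
# A strongly positive bias pushes z near 1, so the state is overwritten.
WRITE = dict(KEEP, b_z=20.0)

h = 0.7
for _ in range(50):
    h = gru_step(1.0, h, KEEP)
print(h)                            # stays very close to 0.7
print(gru_step(1.0, 0.7, WRITE))    # close to the candidate state instead
```

Because the old state can pass through almost unchanged when the gate is closed, information and gradients survive across many steps, which is the mechanism that eases the vanishing gradient problem.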

4. Applications of RNNs in NLP:

- Text Generation: RNNs generate human-like text, making them valuable for applications like creative writing, dialogue systems, and content creation.

- Machine Translation: RNNs have played a crucial role in machine translation tasks, where understanding the context of a sentence is essential for accurate translation.

- Sentiment Analysis: RNNs are applied in sentiment analysis to understand the sentiment expressed in a text by considering the sequence of words.

5. Importance of Context Modeling: RNNs excel at capturing contextual information, allowing them to understand the sequential nature of language. This is particularly valuable in NLP tasks where context plays a vital role, such as understanding a sentence’s meaning or predicting the next word in a sequence.

Recurrent Neural Networks have been fundamental in processing sequential data, especially in natural language. While they face challenges, innovations like LSTM and GRU have significantly improved their effectiveness. As the field progresses, newer architectures, such as transformers, complement and, in some cases, surpass the capabilities of traditional RNNs, marking a continuous evolution in NLP models.

Transformers (BERT, GPT)

Transformers represent a revolutionary architecture in natural language processing (NLP). Introduced by Vaswani et al. in 2017, transformers have become the backbone of state-of-the-art models because they efficiently capture long-range dependencies and contextual information.

Key Concepts of Transformer Architecture

- Attention Mechanism: Transformers leverage attention mechanisms, allowing the model to focus on different parts of the input sequence. Self-attention, in particular, enables each position in the input sequence to consider all other positions, capturing dependencies effectively.

- Multi-Head Attention: Transformers use multiple attention heads, each learning different aspects of the data. This enables the model to capture various types of relationships within the input.

- Positional Encoding: Since transformers do not inherently understand the sequential order of data, positional encodings are added to provide information about the position of each element in the input sequence.
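Two of these concepts can be sketched directly: scaled dot-product self-attention and the sinusoidal positional encoding from the original transformer paper. This is a simplified single-head version in plain Python that uses the inputs themselves as queries, keys, and values; real transformers apply learned projection matrices and run several heads in parallel, which is omitted here. The function names are illustrative.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def positional_encoding(seq_len, d_model):
    """Sinusoidal encodings: sin on even dimensions, cos on odd dimensions."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            angle = pos / (10000 ** (2 * (i // 2) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

def self_attention(X):
    """Single-head attention with Q = K = V = X (no learned projections)."""
    d = len(X[0])
    outputs, all_weights = [], []
    for q in X:
        # Each position scores every position, including itself.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in X]
        weights = softmax(scores)
        all_weights.append(weights)
        outputs.append([sum(w * v[j] for w, v in zip(weights, X)) for j in range(d)])
    return outputs, all_weights

X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(X)
print(weights[0])                      # attention of position 0 over all positions
print(positional_encoding(2, 4))
```

Each output row is a weighted average of all input rows, which is how a position gathers context from anywhere in the sequence in a single step.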

1. BERT (Bidirectional Encoder Representations from Transformers)

Google introduced BERT in 2018. It changed NLP practice by pre-training a bidirectional encoder: unlike earlier language models that read text in a single direction, BERT conditions on both the left and right context of each token, leading to a richer representation of language semantics.

- Pre-training and Fine-tuning: BERT is pre-trained on large corpora and fine-tuned for specific tasks, making it versatile across various NLP applications, including question answering, sentiment analysis, and named entity recognition.

- Masked Language Model (MLM): During pre-training, BERT randomly masks certain words in the input sequence and trains the model to predict these masked words, encouraging it to understand the context and relationships between words.
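The masking step of the MLM objective can be sketched as a small data-preparation function. This is a simplification: it always substitutes a `[MASK]` token, whereas the original BERT recipe sometimes keeps the chosen token or swaps in a random one. The function name, the `seed` parameter, and the use of `None` for ignored positions are assumptions made for this example.

```python
import random

def mask_tokens(tokens, mask_prob=0.15, seed=0, mask_token="[MASK]"):
    """Randomly hide tokens and return (model_input, prediction_targets)."""
    rng = random.Random(seed)  # seeded so the example is reproducible
    masked, labels = [], []
    for token in tokens:
        if rng.random() < mask_prob:
            masked.append(mask_token)
            labels.append(token)   # the model must recover the original token
        else:
            masked.append(token)
            labels.append(None)    # this position does not contribute to the loss
    return masked, labels

tokens = "the cat sat on the mat because it was tired".split()
masked, labels = mask_tokens(tokens, mask_prob=0.3)
print(masked)
print(labels)
```

Because the hidden word can sit anywhere in the sentence, the model has to use context from both sides to predict it, which is what makes the training bidirectional.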

2. GPT (Generative Pre-trained Transformer)

Developed by OpenAI, GPT uses a unidirectional (left-to-right) transformer that is trained to predict the next token. GPT models such as GPT-3, which has 175 billion parameters, are known for their massive scale, enabling them to perform a wide range of language tasks.

- Autoregressive Nature: GPT models generate text autoregressively, predicting the next word based on the preceding context. This approach allows GPT to create coherent and contextually relevant text.

- Few-Shot and Zero-Shot Learning: GPT-3 demonstrated strong few-shot and zero-shot learning, where the model performs a task from a handful of examples in the prompt or from an instruction alone, without task-specific training data.
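The autoregressive loop itself is simple and can be shown with a toy model. The sketch below stands in a bigram count table for the neural network: it predicts each next word from the previous word only, whereas a GPT model conditions on the whole preceding context with a transformer. `train_bigram`, `generate`, and the `<s>` and `</s>` boundary markers are illustrative choices.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count which word follows which, with sentence boundary markers."""
    model = defaultdict(Counter)
    for sentence in corpus:
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            model[prev][nxt] += 1
    return model

def generate(model, max_tokens=10):
    """Greedy decoding: repeatedly append the most likely next word."""
    token, output = "<s>", []
    for _ in range(max_tokens):
        if token not in model:
            break
        token = model[token].most_common(1)[0][0]
        if token == "</s>":
            break
        output.append(token)  # the new word becomes context for the next step
    return output

model = train_bigram(["the cat sat", "the cat sat", "a dog ran"])
print(generate(model))  # prints: ['the', 'cat', 'sat']
```

Production systems usually sample from the predicted distribution rather than always taking the top word, which makes the generated text less repetitive.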

Applications of Transformer Models

- Machine Translation: Transformers have excelled in machine translation tasks because they can effectively capture context and dependencies.

- Question Answering: Models like BERT have shown exceptional performance in question-answering tasks, understanding the context of a question and extracting relevant information from a passage.

- Text Generation: GPT models, with their autoregressive nature, are employed for text generation tasks, including creative writing, content creation, and dialogue systems.

Significance in Context Understanding

The critical strength of transformer models lies in their unparalleled ability to understand context, making them ideal for tasks where a word or phrase’s meaning depends on its surrounding context.

Transformers, exemplified by BERT and GPT, have redefined the landscape of NLP. Their attention mechanisms, combined with bidirectional pre-training in BERT and autoregressive pre-training in GPT, enable them to capture complex language structures, making them pivotal in pushing the boundaries of language understanding and generation. As the field evolves, transformers remain at the forefront of cutting-edge NLP research and applications.

Transfer Learning in NLP

Transfer learning has emerged as a game-changer in natural language processing (NLP), allowing models to leverage knowledge gained from one task or domain to improve performance on another. In the context of NLP, transfer learning has become a cornerstone, enabling the development of more robust and efficient language models.

Core Concepts

- Transfer Learning Paradigm: In transfer learning, a model is trained on a source task or domain and then fine-tuned on a target task or domain. This leverages the knowledge acquired during the initial training to enhance performance on the specific task.

- Pre-trained Models: Pre-trained language models, often trained on large datasets, are the foundation for transfer learning. These models capture general language patterns and semantics, providing a rich source of knowledge for downstream tasks.
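A toy version of this workflow is sketched below, under heavy simplifying assumptions. The "pre-trained model" is a hypothetical table of two-dimensional word vectors that stays frozen, and only a small logistic-regression head is trained on the target task. This is the feature-extraction variant of transfer learning; full fine-tuning would also update the pre-trained weights. All names and values are invented for the illustration.

```python
import math

# Hypothetical frozen "pre-trained" word vectors; not from any real model.
PRETRAINED_VECTORS = {
    "good": [1.0, 0.0], "great": [0.9, 0.1],
    "bad": [0.0, 1.0], "awful": [0.1, 0.9],
    "movie": [0.5, 0.5], "film": [0.5, 0.5],
}

def encode(text):
    """Frozen encoder: average the vectors of the known words."""
    vecs = [PRETRAINED_VECTORS[w] for w in text.lower().split() if w in PRETRAINED_VECTORS]
    if not vecs:
        return [0.0, 0.0]
    return [sum(column) / len(vecs) for column in zip(*vecs)]

def train_head(examples, epochs=500, lr=1.0):
    """Train only a logistic-regression head on top of the frozen features."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for text, label in examples:
            x = encode(text)
            z = sum(wi * xi for wi, xi in zip(w, x)) + b
            p = 1.0 / (1.0 + math.exp(-z))
            error = label - p  # gradient of the log-likelihood with respect to z
            w = [wi + lr * error * xi for wi, xi in zip(w, x)]
            b += lr * error
    return w, b

def predict(text, w, b):
    x = encode(text)
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0

# A tiny labeled target task: 1 = positive, 0 = negative.
train = [("good movie", 1), ("bad movie", 0), ("great film", 1), ("awful film", 0)]
w, b = train_head(train)
print(predict("great movie", w, b), predict("awful movie", w, b))
```

Four labeled examples are enough here only because the frozen vectors already separate positive from negative words, which mirrors the claim that pre-training reduces the amount of task-specific data required.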

Universal Sentence Encoder and BERT-based Models

- Universal Sentence Encoder: Google’s Universal Sentence Encoder is designed to generate embeddings for sentences, capturing their semantic meanings. It excels in tasks like semantic similarity and sentiment analysis, offering a universal representation of sentences.

- BERT (Bidirectional Encoder Representations from Transformers): BERT, a pioneer in transfer learning for NLP, is pre-trained on massive corpora, capturing bidirectional context understanding. Fine-tuning BERT for specific tasks yields impressive results in question answering, text classification, and named entity recognition.

Applications and Benefits

- Improved Performance with Limited Data: Transfer learning allows models to perform well despite limited task-specific data. Pre-trained models bring a wealth of linguistic knowledge, reducing the need for extensive task-specific datasets.

- Domain Adaptation: Transfer learning facilitates adaptation to specific domains. Pre-training on a general domain and fine-tuning on a specialized domain ensures that the model can grasp the intricacies and nuances unique to that domain.

- Reduced Training Time and Resources: By utilizing pre-trained models, transfer learning significantly reduces the time and computational resources required to train effective NLP models. This makes it more accessible for researchers and practitioners.

Challenges and Considerations

- Domain Mismatch: Transfer learning may face challenges if the source and target domains differ significantly. Adapting to domain-specific nuances is crucial for optimal performance.

- Task Relevance: The success of transfer learning relies on the relevance of the source task to the target task. Ensuring that the knowledge gained is applicable and beneficial is essential.

Future Trends

- Continued Advancements in Pre-training: Ongoing research aims to enhance pre-training methods, exploring ways to capture more nuanced contextual information and domain-specific knowledge.

- Multimodal Transfer Learning: Integrating transfer learning across multiple modalities, such as text and images, is an emerging trend. This approach aims to create models that understand and generate content across diverse formats.

Transfer learning has revolutionized NLP by providing a robust framework for leveraging pre-existing knowledge. The ability to transfer learned representations from one context to another has boosted performance and paved the way for more efficient and resource-conscious NLP applications. As research continues, transfer learning remains a focal point in advancing the capabilities of language models.

Conclusion

In the ever-evolving landscape of Natural Language Processing (NLP), the array of tools available serves as the bedrock for transformative advancements in language understanding and interaction. NLP tools, spanning from tokenization and part-of-speech tagging to sentiment analysis and machine translation, have not only streamlined the process of linguistic analysis but have also unlocked the potential for machines to comprehend and generate human-like text.

As we traverse the diverse functionalities of these tools, it becomes evident that NLP is no longer confined to theoretical possibilities but has permeated real-world applications. Sentiment analysis tools decipher the nuanced emotions embedded in the text, machine translation tools bridge linguistic gaps, and chatbot development tools usher in a new era of conversational interfaces.

Moreover, the continuous evolution of NLP tools is fueled by open-source frameworks and community-driven development, making these tools accessible to researchers, developers, and businesses alike. The democratization of NLP empowers innovators to push the boundaries of what is possible, fostering an environment of collaboration and rapid progress.

However, challenges persist, ranging from addressing bias in models to navigating the complexities of multilingual and contextual understanding. As we strive for more inclusive, ethical, and context-aware NLP solutions, the tools we employ become not just instruments of analysis but agents of societal impact.

In conclusion, the realm of NLP tools encapsulates the dynamic synergy between linguistic expertise and technological innovation. With their diverse capabilities, these tools form the building blocks of applications that touch our daily lives, from enhancing search engines to facilitating seamless communication with virtual assistants. As we look ahead, the trajectory of NLP tools promises refinement in existing functionalities and the emergence of novel applications that will redefine our relationship with language and technology. The journey continues, and the narrative of NLP unfolds with each discovery, pushing the boundaries of what was once deemed impossible.
